A popular statistics application used in the Biology Department is Statview. The Statview output for this example is reproduced below. The first table summarizes the analysis, indicating that there are 104 data points in the analysis. Furthermore, our r-value is 0.916 and our coefficient of determination, r², is 0.840. These values are high, indicating that knowing the girth of a tree will allow us to make an accurate estimate of its weight.

The second table confirms our hunch of a significant relationship between tree girth and weight. The F-value in the table is 533.679, with a p-value <0.0001. The p-value gives the probability of obtaining an F-value at least this large if the slope were truly zero, which would indicate that there is no correlation between the two variables. The low p-value indicates that a relationship this strong would be vanishingly unlikely to arise by chance if the two variables were not related.

The third table gives us the coefficients for our regression equation.

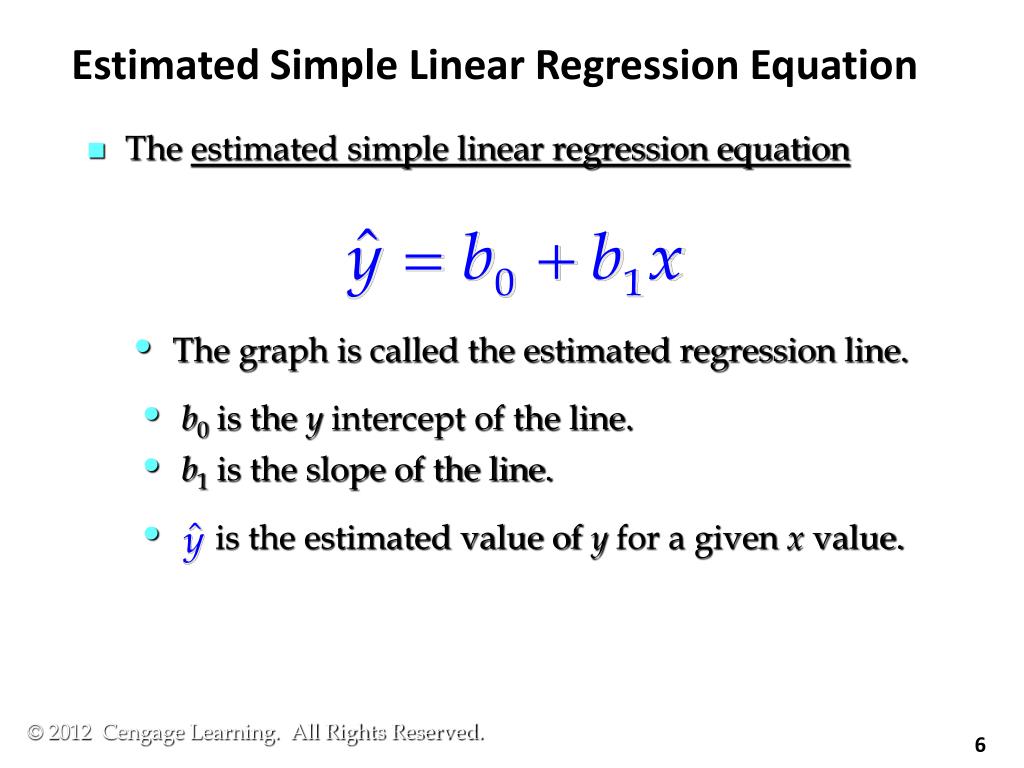
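As a rough cross-check of the reported values, the F-value of a simple linear regression can be recovered from r² and the number of data points using F = r²(n − 2) / (1 − r²). The sketch below assumes an ordinary least-squares fit with an intercept and that all 104 points entered the regression; the function name `f_from_r2` is ours, not part of Statview.

```python
def f_from_r2(r2: float, n: int) -> float:
    """F statistic of a simple linear regression (1 and n-2 degrees of freedom)."""
    return r2 * (n - 2) / (1.0 - r2)


n_points = 104  # number of data points reported in the first table

# r and r^2 are reported to three decimals, so the two estimates differ slightly.
f_from_rounded_r2 = f_from_r2(0.840, n_points)
f_from_rounded_r = f_from_r2(0.916 ** 2, n_points)

print(f"F from r^2 = 0.840: {f_from_rounded_r2:.1f}")
print(f"F from r   = 0.916: {f_from_rounded_r:.1f}")
```

The two estimates bracket the reported F-value of 533.679, which is what one would expect if the small differences come only from rounding r and r².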

#Simple linear regression with statistics software#

The calculation of a regression is tedious and time-consuming. Statistics software and many spreadsheet packages will do a regression analysis for you.

A regression may be significant but have a very low r², particularly when there are many data points used to generate it. A low r² indicates that little of the variation in the dependent variable can be explained by variation in the independent variable.

In the example below, we used regression analysis to explore the relationship between trunk girth and weight of trees, using trunk girth as the independent variable.

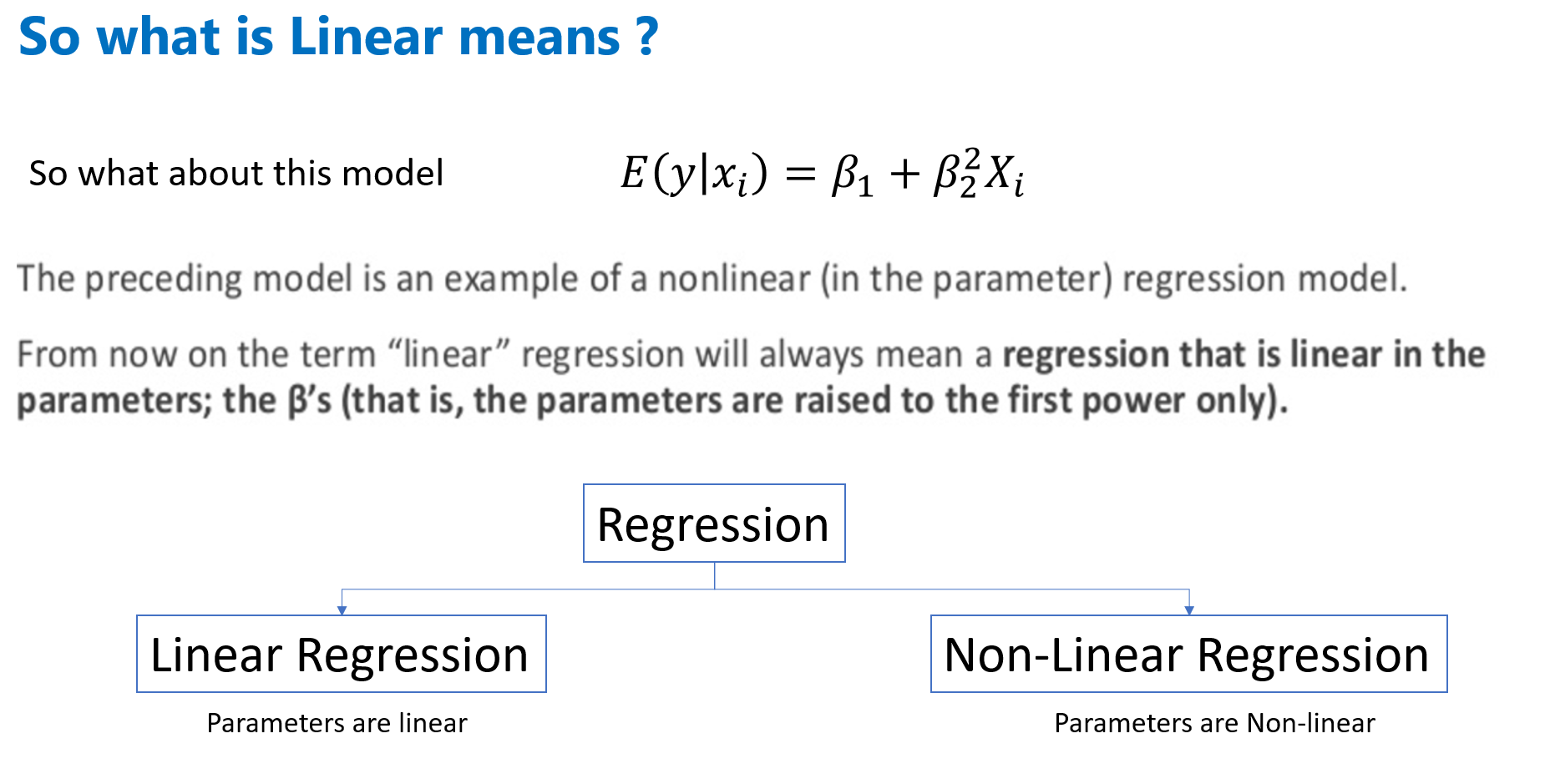
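The point that a regression can be significant while explaining little of the variation can be made concrete by holding r² fixed and varying the number of data points. The sketch below is illustrative only: the r² of 0.05 and the sample sizes are hypothetical, and the p-value uses a large-sample normal approximation (F with 1 numerator degree of freedom is the square of a t statistic), so it is only rough for small n.

```python
import math


def f_statistic(r2: float, n: int) -> float:
    """F statistic of a simple linear regression (1 and n-2 degrees of freedom)."""
    return r2 * (n - 2) / (1.0 - r2)


def approx_p_value(f_value: float) -> float:
    """Approximate p-value, treating sqrt(F) as a standard normal z (two-sided).

    This is a large-sample approximation, not the exact F-distribution tail.
    """
    return math.erfc(math.sqrt(f_value / 2.0))


r2 = 0.05  # hypothetical: only 5% of the variation is explained
for n in (20, 100, 1000):
    f = f_statistic(r2, n)
    print(f"n={n:5d}  r^2={r2:.2f}  F={f:8.3f}  approx p={approx_p_value(f):.2e}")
```

With the same weak r², the approximate p-value falls from clearly non-significant at n = 20 to extremely small at n = 1000, which is why significance alone says little about predictive value.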

Regression involves the determination of the degree of relationship in the patterns of variation of two or more variables through the calculation of the coefficient of correlation, r. The value of r can vary between 1.0, perfect positive correlation, and -1.0, perfect negative correlation. When r=0, there is zero correlation, meaning that the variation of one variable cannot be used to explain any of the variation in the other variable. The coefficient of determination, r², is a measure of how well the variation of one variable explains the variation of the other, and corresponds to the percentage of the variation explained by a best-fit regression line which is calculated for the data.

In simple linear regression, a single dependent variable, Y, is considered to be a function of an independent X variable, and the relationship between the variables is defined by a straight line. (Note: many biological relationships are known to be non-linear and other models apply.) When a best-fit regression line is calculated, its linear equation (y=mx+b) defines how the variation in the X variable explains the variation in the Y variable.

Regression analysis also involves measuring the amount of variation not taken into account by the regression equation, and this variation is known as the residual. As the value of r² increases, one can place more confidence in the predictive value of the regression line. A statistical test called the F-test is used to compare the variation explained by the regression line to the residual variation. The p-value that results from the F-test is the probability of obtaining an F-value at least as large as the one observed if the slope of the regression line were zero (i.e., under the null hypothesis).

Figure 73.1 includes some information concerning model fit. The F statistic for the overall model is highly significant (F=57.076, p<0.0001), indicating that the model explains a significant portion of the variation in the data. The degrees of freedom can be used in checking accuracy of the data and model. The model degrees of freedom are one less than the number of parameters to be estimated. This model estimates two parameters, and thus, the degrees of freedom should be 2-1=1. The corrected total degrees of freedom are always one less than the total number of observations in the data set.

Several simple statistics follow the ANOVA table. The Root MSE is an estimate of the standard deviation of the error term. The coefficient of variation, or Coeff Var, is a unitless expression of the variation in the data. The R-square and Adj R-square are two statistics used in assessing the fit of the model; values close to 1 indicate a better fit. The R-square of 0.77 indicates that Height accounts for 77% of the variation in Weight.
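The fit statistics described above (degrees of freedom, F, Root MSE, Coeff Var, R-square, Adj R-square) can all be derived from the sums of squares of a fitted line. The following is a minimal sketch under stated assumptions: the data are hypothetical illustration values (not the Height/Weight or tree data), the function name `fit_summary` is ours, Coeff Var is taken as 100 × Root MSE / mean of Y, and Adj R-square uses the usual correction for a model with an intercept.

```python
import math


def fit_summary(x, y):
    """Least-squares fit of y = intercept + slope * x with ANOVA-style statistics."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n

    # Slope and intercept of the best-fit line.
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    fitted = [intercept + slope * xi for xi in x]

    # Partition of the variation: total = model (explained) + error (residual).
    ss_total = sum((yi - mean_y) ** 2 for yi in y)
    ss_model = sum((fi - mean_y) ** 2 for fi in fitted)
    ss_error = sum((yi - fi) ** 2 for yi, fi in zip(y, fitted))

    # Degrees of freedom: two parameters (intercept and slope) are estimated.
    n_params = 2
    df_model = n_params - 1
    df_total = n - 1
    df_error = df_total - df_model

    mse = ss_error / df_error
    root_mse = math.sqrt(mse)
    r_square = ss_model / ss_total
    return {
        "slope": slope,
        "intercept": intercept,
        "ss_model": ss_model,
        "ss_error": ss_error,
        "ss_total": ss_total,
        "df_model": df_model,
        "df_error": df_error,
        "df_total": df_total,
        "f_value": (ss_model / df_model) / mse,
        "root_mse": root_mse,
        "coeff_var": 100.0 * root_mse / mean_y,
        "r_square": r_square,
        "adj_r_square": 1.0 - (1.0 - r_square) * df_total / df_error,
    }


# Hypothetical illustration data.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.1, 3.9, 6.2, 7.8, 10.1]

summary = fit_summary(x, y)
for name, value in summary.items():
    print(f"{name:>12}: {value:.4f}")
```

Checking that the model and error sums of squares add up to the total, and that the degrees of freedom match the number of observations and parameters, is the kind of accuracy check the text refers to.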